ML and DL Research Bootcamp

Work on impactful ML/DL research. Present at top-tier conferences. Publish research papers. Build ML algorithms and neural networks from scratch with Python and NumPy, then apply them with scikit-learn.


Hear From Dr. Sreedath Panat (MIT PhD)

The Foundations of ML and Deep Learning

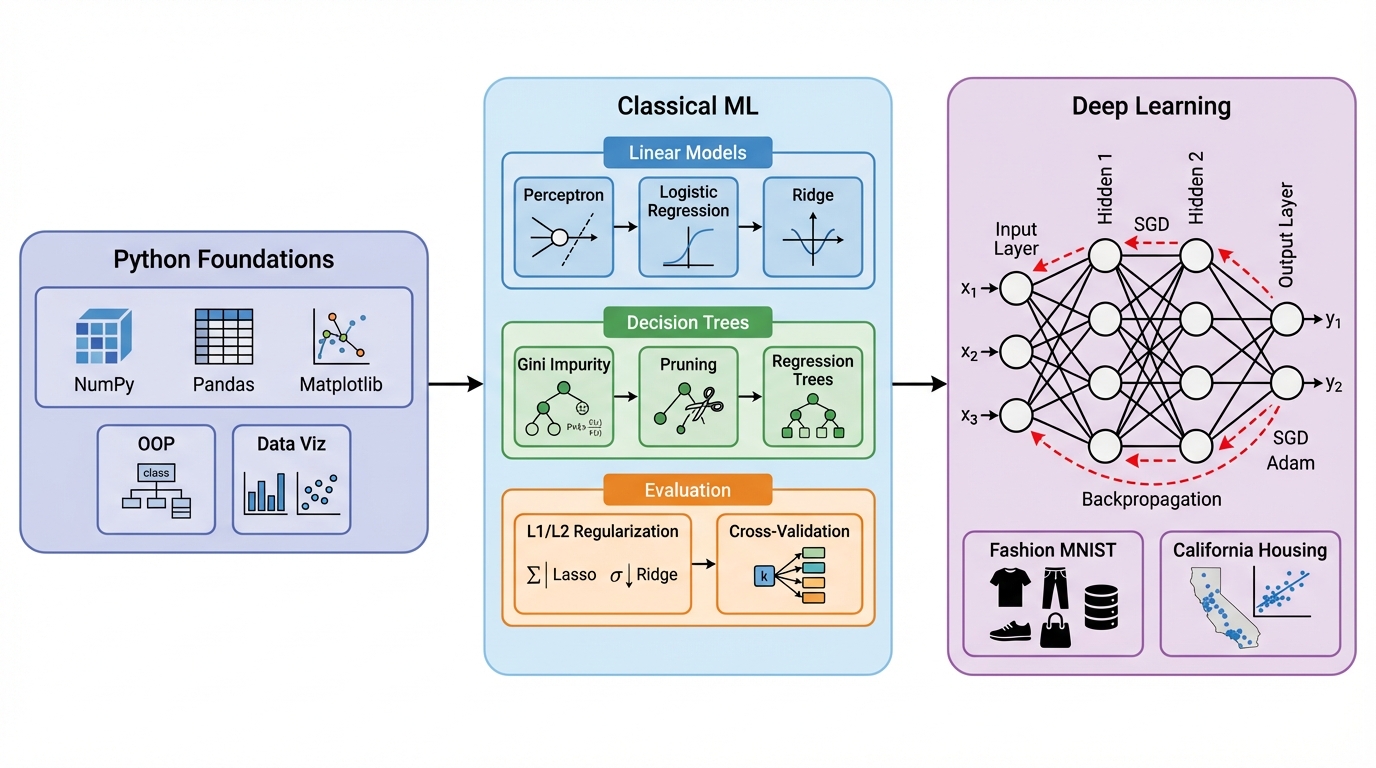

The curriculum is built around interconnected objectives that take you from Python fundamentals to building and training neural networks from scratch. Each one builds on the previous, creating a rigorous foundation for ML research.

Python Foundations for ML

Start with Python fundamentals tailored for machine learning: variables, data types, matrix multiplication from scratch, object-oriented programming, and data visualization with Matplotlib, Seaborn, and Plotly. Build a solid coding foundation using NumPy and Pandas.

Classical Machine Learning

Master the core algorithms that power modern ML: linear classifiers, the perceptron, logistic regression with cross-entropy loss, gradient descent optimization, L1/L2 regularization, and decision trees with Gini impurity. Build every algorithm from scratch before using scikit-learn.

Neural Networks from Scratch

Build neural networks layer by layer using only NumPy: code neurons, forward passes, activation functions, cross-entropy loss, and full backpropagation. Master optimizers (SGD, RMSProp, Adam), regularization (dropout, weight decay), and K-fold cross-validation, and train on real datasets like Fashion-MNIST and California Housing.

Optimization and Training

Understand gradient descent at a deep mathematical level: the chain rule, matrix gradients, and how the gradients computed by backpropagation are used to update the weights. Learn to diagnose overfitting, apply regularization strategies, and build complete training pipelines.

Hands-on Projects and Interviews

Each module includes hands-on projects and interview-oriented recaps. Build classifiers, regression models, decision trees, and neural networks on real datasets. The bootcamp is designed to prepare you for both research and industry ML roles.

Research and Publication

Work on industry-level ML/DL research projects aimed at publication. Learn to formulate research problems, design experiments, validate hypotheses, and write scientific papers for conferences and journals.

How Machine Learning and Deep Learning Work

Publication-quality diagrams illustrating the core algorithms and architectures you will master in this bootcamp.

The ML-DL Landscape

A high-level overview of the bootcamp curriculum: from Python foundations and data visualization, through classical ML algorithms (regression, decision trees), to building neural networks from scratch with backpropagation and optimizers.

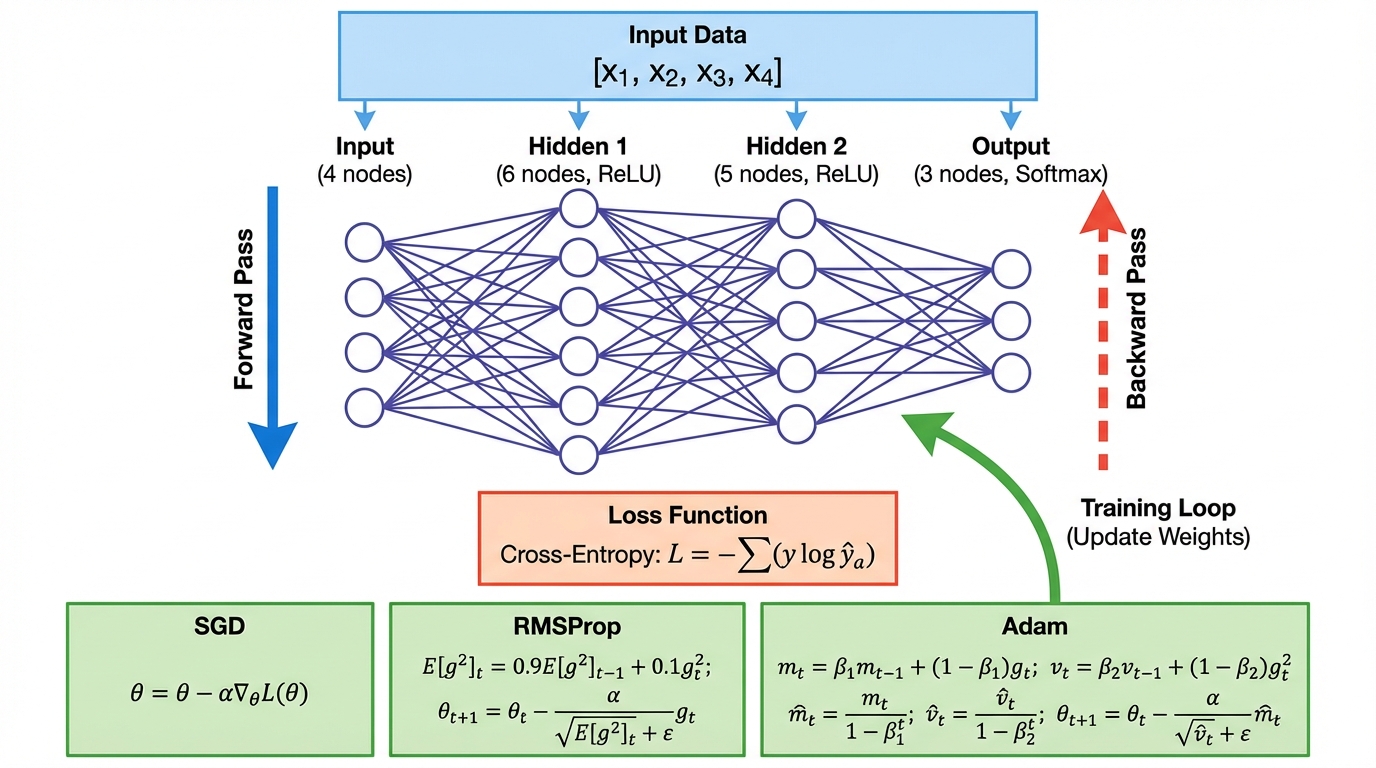

Neural Network Training Pipeline

The complete training loop: forward pass through multiple layers with activation functions, cross-entropy loss computation, backward pass with gradient propagation via the chain rule, and weight updates using SGD, RMSProp, or Adam optimizers.

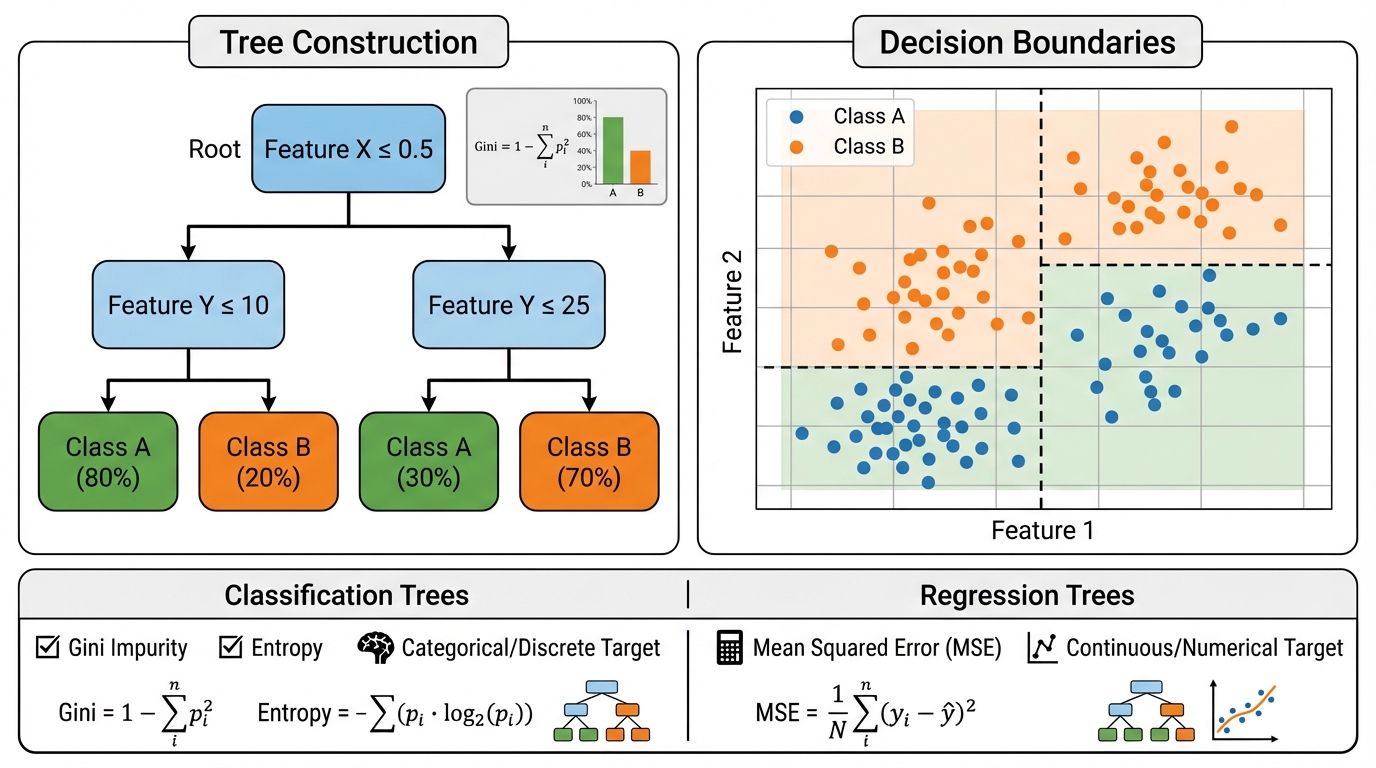

Decision Tree Construction

Decision tree construction using Gini impurity to choose splits, recursive binary splitting, pruning strategies, and the resulting axis-aligned, rectangular partitions of the feature space for classification and regression tasks.

Designed for Beginners, Engineers, and Researchers

Whether you are new to programming or an experienced developer looking to build a rigorous ML foundation, this bootcamp teaches you to implement every algorithm from scratch before using libraries.

Students and Beginners

Undergraduate and graduate students who want a rigorous, ground-up understanding of machine learning and deep learning. No prior ML experience required: we start from Python basics.

Software Engineers

Developers looking to transition into ML/AI roles. Build every algorithm from scratch before using frameworks, giving you the deep understanding that separates ML engineers from API callers.

Data Scientists

Analysts and data practitioners who use ML libraries but want to understand what happens under the hood. Master the mathematics and implementation behind regression, trees, and neural networks.

Aspiring Researchers

Students aiming for graduate programs or research careers in AI. The research project component and publication pathway strengthen applications to top PhD programs.

A Guided Journey from Python to Neural Networks

30 topics across 6 weeks, covering Python foundations, machine learning algorithms, and deep learning from scratch. Phase 1 (Weeks 1 through 6) is entirely self-paced: all lectures are pre-recorded and available for lifetime access, so you learn at your own speed.

Python Basics, Variables, Data Types

- Python fundamentals for machine learning: variables, data types, control flow, and functions

Matrix Multiplication from Scratch

- Implementing matrix multiplication in pure Python and understanding the computational foundations of ML
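As a rough illustration of this topic, here is a minimal pure-Python matrix multiplication over lists of lists. The function name `matmul` is our own choice, not taken from the course materials. It computes each output entry as the dot product of a row of the first matrix with a column of the second.

```python
def matmul(a, b):
    """Multiply an m x n matrix `a` by an n x p matrix `b` (both lists of lists)."""
    n = len(b)
    if any(len(row) != n for row in a):
        raise ValueError("inner dimensions must match")
    p = len(b[0])
    result = [[0] * p for _ in range(len(a))]
    for i, row in enumerate(a):
        for j in range(p):
            # Entry (i, j) is the dot product of row i of a and column j of b.
            result[i][j] = sum(row[k] * b[k][j] for k in range(n))
    return result


print(matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # [[19, 22], [43, 50]]
```

The triple loop costs O(m·n·p) operations, which is why NumPy's vectorized `a @ b` takes over once the idea is clear.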

Classes and Objects in ML

- Object-oriented programming patterns used in ML codebases: classes, inheritance, and encapsulation

Intro to NumPy and Pandas

- NumPy arrays, vectorized operations, broadcasting, and Pandas DataFrames for data loading and exploration

Data Visualization: Matplotlib, Seaborn, Plotly

- Creating publication-quality visualizations: line plots, histograms, scatter plots, heatmaps, and interactive charts

What is ML, Types of ML Models

- Supervised, unsupervised, and reinforcement learning paradigms, with real-world examples of each

The 6 Steps of an ML Project

- End-to-end ML workflow: data collection, preprocessing, feature engineering, model selection, training, and evaluation

Linear Classifiers and the Perceptron

- Decision boundaries, the perceptron algorithm, the perceptron convergence theorem, and limitations of linear classifiers
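A minimal sketch of the classic perceptron update, assuming labels in {-1, +1} and an explicit offset `theta0`. The names are illustrative, not the bootcamp's code. On every misclassified point the weights move toward the correct label. For linearly separable data the convergence theorem guarantees that an error-free pass eventually occurs.

```python
def perceptron(X, y, epochs=100):
    """Classic perceptron on feature lists X with labels y in {-1, +1}.
    Returns (theta, theta0); stops early once an epoch makes no mistakes."""
    theta, theta0 = [0.0] * len(X[0]), 0.0
    for _ in range(epochs):
        mistakes = 0
        for x, label in zip(X, y):
            margin = label * (sum(t * xi for t, xi in zip(theta, x)) + theta0)
            if margin <= 0:  # misclassified or on the boundary: nudge toward the label
                theta = [t + label * xi for t, xi in zip(theta, x)]
                theta0 += label
                mistakes += 1
        if mistakes == 0:
            break
    return theta, theta0


def predict(theta, theta0, x):
    return 1 if sum(t * xi for t, xi in zip(theta, x)) + theta0 > 0 else -1


# Logical AND is linearly separable, so the perceptron converges.
X = [[0, 0], [0, 1], [1, 0], [1, 1]]
y = [-1, -1, -1, 1]
theta, theta0 = perceptron(X, y)
print([predict(theta, theta0, x) for x in X])  # [-1, -1, -1, 1]
```

On XOR, which is not linearly separable, the loop never reaches an error-free epoch. That is the limitation of linear classifiers this topic refers to.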

NumPy, Scikit-learn, Jupyter

- Setting up the ML development environment: Jupyter notebooks, scikit-learn API patterns, and NumPy for computation

Build Random Linear Classifier

- Building a random linear classifier from scratch, understanding decision boundaries and classification accuracy
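The page does not define the random linear classifier precisely. A common formulation, assumed here, samples many random hyperplanes (theta, theta0) and keeps the one with the lowest training error. It serves as a baseline that motivates learning algorithms like the perceptron. The Gaussian sampling and the function names are illustrative choices.

```python
import random


def training_error(theta, theta0, X, y):
    """Fraction of points whose predicted sign disagrees with the label in {-1, +1}."""
    wrong = 0
    for x, label in zip(X, y):
        score = sum(t * xi for t, xi in zip(theta, x)) + theta0
        if (1 if score > 0 else -1) != label:
            wrong += 1
    return wrong / len(X)


def random_linear_classifier(X, y, k=1000, seed=0):
    """Sample k hyperplanes with Gaussian coefficients; keep the lowest-error one."""
    rng = random.Random(seed)
    best, best_err = None, float("inf")
    for _ in range(k):
        theta = [rng.gauss(0.0, 1.0) for _ in range(len(X[0]))]
        theta0 = rng.gauss(0.0, 1.0)
        err = training_error(theta, theta0, X, y)
        if err < best_err:
            best, best_err = (theta, theta0), err
    return best, best_err


X = [[2, 2], [3, 3], [-2, -2], [-3, -3]]
y = [1, 1, -1, -1]
(theta, theta0), err = random_linear_classifier(X, y)
print(err)
```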

Logistic Regression Intuition and Coding

- Sigmoid function, probability interpretation, decision boundaries, and implementing logistic regression from scratch

Cross Entropy Loss and Gradient Descent

- Deriving cross-entropy loss, gradient computation, learning rate selection, and convergence analysis
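A minimal NumPy sketch of logistic regression trained by batch gradient descent on the mean binary cross-entropy, assuming 0/1 labels. It relies on the standard simplification that, for a sigmoid output with cross-entropy loss, the gradient with respect to the logits is (p - y) / n. The function names, learning rate, and epoch count are illustrative assumptions.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def cross_entropy(p, y, eps=1e-12):
    """Mean binary cross-entropy for predicted probabilities p and 0/1 labels y."""
    p = np.clip(p, eps, 1 - eps)
    return -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))


def train_logistic_regression(X, y, lr=0.1, epochs=1000):
    """Batch gradient descent on the mean cross-entropy loss."""
    n, d = X.shape
    w, b = np.zeros(d), 0.0
    for _ in range(epochs):
        p = sigmoid(X @ w + b)
        # For sigmoid + cross-entropy, dL/dz simplifies to (p - y) / n.
        w -= lr * (X.T @ (p - y)) / n
        b -= lr * np.mean(p - y)
    return w, b


X = np.array([[-2.0], [-1.0], [1.0], [2.0]])
y = np.array([0.0, 0.0, 1.0, 1.0])
w, b = train_logistic_regression(X, y)
print(cross_entropy(sigmoid(X @ w + b), y))  # below log(2), the loss at w = 0, b = 0
```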

Regularization: L1/L2

- Lasso (L1) and Ridge (L2) regularization: mathematical formulation, effect on weights, and preventing overfitting

Linear and Ridge Regression

- Ordinary least squares, closed-form solution, Ridge regression with regularization, and model evaluation metrics
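The closed-form Ridge solution w = (XᵀX + λI)⁻¹ Xᵀy can be sketched in a few lines of NumPy. λ = 0 gives ordinary least squares. In this illustrative version, which is one common convention rather than necessarily the course's, the data are centered first so the intercept is not penalized. The function name `ridge_fit` is hypothetical.

```python
import numpy as np


def ridge_fit(X, y, lam=1.0):
    """Closed-form Ridge regression; lam = 0 reduces to ordinary least squares.
    Data are centered first so the intercept b is not penalized."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    # Solve (Xc^T Xc + lam * I) w = Xc^T yc rather than forming an explicit inverse.
    w = np.linalg.solve(Xc.T @ Xc + lam * np.eye(X.shape[1]), Xc.T @ yc)
    b = y_mean - x_mean @ w
    return w, b


X = np.array([[0.0], [1.0], [2.0], [3.0]])
y = 2.0 * X[:, 0] + 1.0            # exact line y = 2x + 1
print(ridge_fit(X, y, lam=0.0))    # OLS: w close to 2, b close to 1
print(ridge_fit(X, y, lam=5.0))    # the penalty shrinks w toward 0 (about 1 here)
```

The second call shows the effect of the penalty: a larger λ pulls the weights toward zero, trading a little bias for lower variance.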

Interview-Oriented Recap

- Comprehensive review of regression concepts with ML interview-style questions and problem-solving strategies

Gini Impurity and Tree Construction

- Gini impurity measure, information gain, recursive tree construction, and feature selection for splits
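A minimal pure-Python sketch of Gini impurity, 1 - Σₖ pₖ², together with an exhaustive search for the best binary split x[f] <= t. Minimizing the size-weighted impurity of the two children is equivalent to maximizing the Gini gain. The function names and the split convention are illustrative assumptions.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 1 - sum of squared class proportions."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def best_split(X, y):
    """Try every feature f and threshold t; return (f, t, weighted child impurity)
    for the split x[f] <= t with the lowest weighted Gini impurity."""
    best = (None, None, float("inf"))
    n = len(y)
    for f in range(len(X[0])):
        for t in sorted(set(row[f] for row in X)):
            left = [label for row, label in zip(X, y) if row[f] <= t]
            right = [label for row, label in zip(X, y) if row[f] > t]
            if not left or not right:
                continue  # a split must send points to both children
            score = len(left) / n * gini(left) + len(right) / n * gini(right)
            if score < best[2]:
                best = (f, t, score)
    return best


X = [[1, 5], [2, 4], [3, 7], [4, 8]]
y = ["a", "a", "b", "b"]
print(gini(y))            # 0.5
print(best_split(X, y))   # (0, 2, 0.0): feature 0 at threshold 2 gives pure children
```

A full tree builder would call `best_split` recursively on each child until a stopping rule (depth, minimum samples, zero impurity) is met.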

Pruning Trees, Full Code Walkthrough

- Pre-pruning and post-pruning strategies, cost-complexity pruning, and complete decision tree implementation

Regression Trees with Multiple Features

- Extending decision trees to regression problems, handling continuous features, and multi-feature splitting

Hands-on: Build Regression and Classification Trees

- End-to-end project building both regression and classification trees on real-world datasets

Interview Prep: Trees Summary

- Decision tree interview questions, comparison with other algorithms, and when to use trees vs. other methods

Coding Neurons and Layers using NumPy

- Implementing single neurons, dense layers, and multi-layer architectures using only NumPy arrays

Forward Pass, Activation Functions

- Computing forward passes through layers, ReLU, sigmoid, tanh, and softmax activation functions
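A minimal NumPy sketch of a forward pass through two dense layers, with a ReLU hidden activation and a softmax output. `Dense` is a hypothetical minimal class, and the layer sizes and 0.01 weight scale are arbitrary illustrative choices. Sigmoid and tanh would slot in the same way (`np.tanh` exists directly).

```python
import numpy as np


def relu(z):
    return np.maximum(0.0, z)


def softmax(z):
    # Subtracting the row-wise max avoids overflow and leaves the result unchanged.
    e = np.exp(z - z.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)


class Dense:
    """A fully connected layer computing X @ W + b (hypothetical minimal class)."""

    def __init__(self, n_inputs, n_neurons, rng):
        self.W = 0.01 * rng.standard_normal((n_inputs, n_neurons))
        self.b = np.zeros((1, n_neurons))

    def forward(self, X):
        return X @ self.W + self.b


rng = np.random.default_rng(0)
X = rng.standard_normal((5, 3))   # 5 samples, 3 features
hidden = Dense(3, 4, rng)
output = Dense(4, 2, rng)
probs = softmax(output.forward(relu(hidden.forward(X))))
print(probs.shape)                # (5, 2); each row is a probability distribution
```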

Loss Functions: Cross Entropy

- Binary and categorical cross-entropy, MSE loss, and choosing the right loss function for your problem

Backpropagation (Single Neuron + Full Layer)

- Deriving backpropagation for a single neuron, extending to full layers, and computing weight gradients

Chain Rule and Matrix Gradients

- The chain rule for composite functions, Jacobian matrices, and efficient matrix gradient computation for deep networks
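For a dense layer applied to a batch, the chain rule reduces to a few matrix products. The notation below is our own, assumed for illustration. X ∈ ℝ^{n×d} is a batch of n inputs, W ∈ ℝ^{d×m} the weights, b ∈ ℝ^m the bias, f an elementwise activation, **1** the all-ones vector of length n, and ⊙ the elementwise product.

```latex
\begin{aligned}
Z &= XW + \mathbf{1}\,b^{\top}, \qquad A = f(Z), \\
\delta &= \frac{\partial L}{\partial Z} = \frac{\partial L}{\partial A} \odot f'(Z), \\
\frac{\partial L}{\partial W} &= X^{\top}\delta, \qquad
\frac{\partial L}{\partial b} = \delta^{\top}\mathbf{1}, \qquad
\frac{\partial L}{\partial X} = \delta\,W^{\top}.
\end{aligned}
```

The last term, ∂L/∂X, plays the role of ∂L/∂A for the preceding layer. This is how gradients propagate backward through a deep network without ever forming full Jacobian matrices explicitly.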

Complete Backprop Pipeline

- Assembling the full training loop: forward pass, loss computation, backward pass, and weight update in a unified pipeline
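A compact NumPy sketch of the complete loop: forward pass, cross-entropy loss, backward pass via the chain rule, and a plain gradient-descent update. It assumes a single ReLU hidden layer, a softmax output, integer class labels, and He-style initialization. The two Gaussian blobs are an illustrative stand-in for a real dataset. All names and hyperparameters are assumptions, not the bootcamp's code.

```python
import numpy as np


def train_two_layer_net(X, y, hidden=8, lr=0.1, epochs=500, seed=0):
    """Full-batch gradient descent for a ReLU hidden layer and a softmax output
    trained with categorical cross-entropy. `y` holds integer class labels."""
    rng = np.random.default_rng(seed)
    n, d = X.shape
    k = int(y.max()) + 1
    W1 = rng.standard_normal((d, hidden)) * np.sqrt(2.0 / d)  # He initialization
    b1 = np.zeros(hidden)
    W2 = rng.standard_normal((hidden, k)) * np.sqrt(2.0 / hidden)
    b2 = np.zeros(k)
    Y = np.eye(k)[y]  # one-hot targets, shape (n, k)
    losses = []
    for _ in range(epochs):
        # Forward pass
        z1 = X @ W1 + b1
        a1 = np.maximum(0.0, z1)
        z2 = a1 @ W2 + b2
        e = np.exp(z2 - z2.max(axis=1, keepdims=True))
        p = e / e.sum(axis=1, keepdims=True)
        losses.append(-np.mean(np.log(p[np.arange(n), y] + 1e-12)))
        # Backward pass: softmax + cross-entropy gives dL/dz2 = (p - Y) / n
        dz2 = (p - Y) / n
        dW2 = a1.T @ dz2
        db2 = dz2.sum(axis=0)
        dz1 = (dz2 @ W2.T) * (z1 > 0)  # chain rule through the ReLU
        dW1 = X.T @ dz1
        db1 = dz1.sum(axis=0)
        # Parameter update (plain gradient descent)
        W1 -= lr * dW1
        b1 -= lr * db1
        W2 -= lr * dW2
        b2 -= lr * db2
    return (W1, b1, W2, b2), losses


def predict(params, X):
    W1, b1, W2, b2 = params
    return np.argmax(np.maximum(0.0, X @ W1 + b1) @ W2 + b2, axis=1)


rng = np.random.default_rng(1)
X = np.vstack([rng.normal(-1.0, 0.5, (50, 2)), rng.normal(1.0, 0.5, (50, 2))])
y = np.array([0] * 50 + [1] * 50)
params, losses = train_two_layer_net(X, y)
print(losses[0], losses[-1], np.mean(predict(params, X) == y))
```

Swapping the four update lines for an optimizer object (SGD with momentum, RMSProp, Adam) is the natural next step.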

Optimizers: GD, RMSProp, Adam

- Vanilla gradient descent, momentum, RMSProp adaptive learning rates, and Adam optimizer implementation
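A minimal sketch of the Adam update rule as published by Kingma and Ba, using the commonly cited defaults (lr=0.001, β1=0.9, β2=0.999, ε=1e-8). The `Adam` class and its `step` method are our own illustration, not a library API. The first moment `m` supplies momentum and the second moment `v` supplies RMSProp-style per-parameter scaling. Setting β1 = 0 leaves an RMSProp-like update with bias correction.

```python
import numpy as np


class Adam:
    """Adam update rule for a single parameter array (illustrative sketch)."""

    def __init__(self, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
        self.lr, self.beta1, self.beta2, self.eps = lr, beta1, beta2, eps
        self.m = None  # first-moment (momentum) estimate
        self.v = None  # second-moment (squared-gradient) estimate
        self.t = 0

    def step(self, param, grad):
        if self.m is None:
            self.m = np.zeros_like(param)
            self.v = np.zeros_like(param)
        self.t += 1
        self.m = self.beta1 * self.m + (1 - self.beta1) * grad
        self.v = self.beta2 * self.v + (1 - self.beta2) * grad ** 2
        m_hat = self.m / (1 - self.beta1 ** self.t)  # bias correction
        v_hat = self.v / (1 - self.beta2 ** self.t)
        return param - self.lr * m_hat / (np.sqrt(v_hat) + self.eps)


# Usage: minimize f(w) = sum((w - 3)^2), whose gradient is 2 * (w - 3).
w = np.zeros(2)
opt = Adam(lr=0.1)
for _ in range(500):
    w = opt.step(w, 2 * (w - 3))
print(w)
```

Because of the bias correction, the very first step has magnitude close to `lr` regardless of the gradient's scale. That scale invariance is one reason Adam is a popular default.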

Overfitting: Dropout, Regularization, K-fold CV

- Diagnosing overfitting with learning curves, dropout regularization, weight decay, and K-fold cross-validation
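A minimal pure-Python sketch of K-fold splitting. Each index lands in exactly one validation fold, and the first n mod k folds get one extra element. The function name `k_fold_indices` is hypothetical. scikit-learn's `sklearn.model_selection.KFold` (with `n_splits`, `shuffle`, `random_state`) provides the same kind of splitting in library form.

```python
import random


def k_fold_indices(n, k, seed=None):
    """Yield (train_idx, val_idx) pairs for k folds over indices 0..n-1.
    If a seed is given, indices are shuffled before splitting."""
    idx = list(range(n))
    if seed is not None:
        random.Random(seed).shuffle(idx)
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val = idx[start:start + size]
        train = idx[:start] + idx[start + size:]
        yield train, val
        start += size


for train, val in k_fold_indices(10, 3):
    print(len(train), val)
```

To cross-validate, train a fresh model on each `train` split, score it on the matching `val` split, and average the k scores.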

Projects: Fashion-MNIST + California Housing

- End-to-end classification on Fashion-MNIST using your neural network from scratch

- Regression on the California Housing dataset with your custom training pipeline

- Model evaluation, hyperparameter tuning, and results visualization

Final Recap: Neural Network in 100 Minutes

- Comprehensive review: from a single neuron to a full deep network

- Key concepts consolidated: forward pass, loss, backprop, optimizers, regularization

- Interview preparation and next steps for advanced deep learning

Research-Grade Deliverables

Everything you need to go from ML beginner to building neural networks from scratch and publishing research.

Complete Python Codebase

Production-ready Python code for every session, including from-scratch implementations of every algorithm: regression, decision trees, and neural networks.

- All lecture code files and Jupyter notebooks

- Homework assignments with solutions

- Research project starter templates

- Fully documented ML pipelines

Lecture Notes and Videos

Lifetime access to all session recordings and comprehensive lecture notes covering every ML and DL concept from Python basics to neural networks.

- HD video recordings of all sessions

- Detailed lecture notes in PDF format

- Annotated code walkthroughs

- Reference material and reading lists

Research Project Portfolio

Industry-level ML/DL projects including neural network classifiers, regression models, and decision tree systems ready for your portfolio or publication.

- Neural network trained on Fashion-MNIST

- Regression pipeline for California Housing

- Decision tree classifier implementation

- Publication-ready research results

Community and Mentorship

Join the Vizuara ML-DL community on Discord for ongoing collaboration, doubt clearance, and research partnerships.

- Discord community access

- Student collaboration opportunities

- Assignment checking and doubt clearance

- Free access to all ML webinars

Learn from MIT and Purdue AI PhDs

Our instructors are co-founders of Vizuara AI Labs and published researchers in Machine Learning and Deep Learning, with expertise spanning neural networks, optimization, and applied ML.

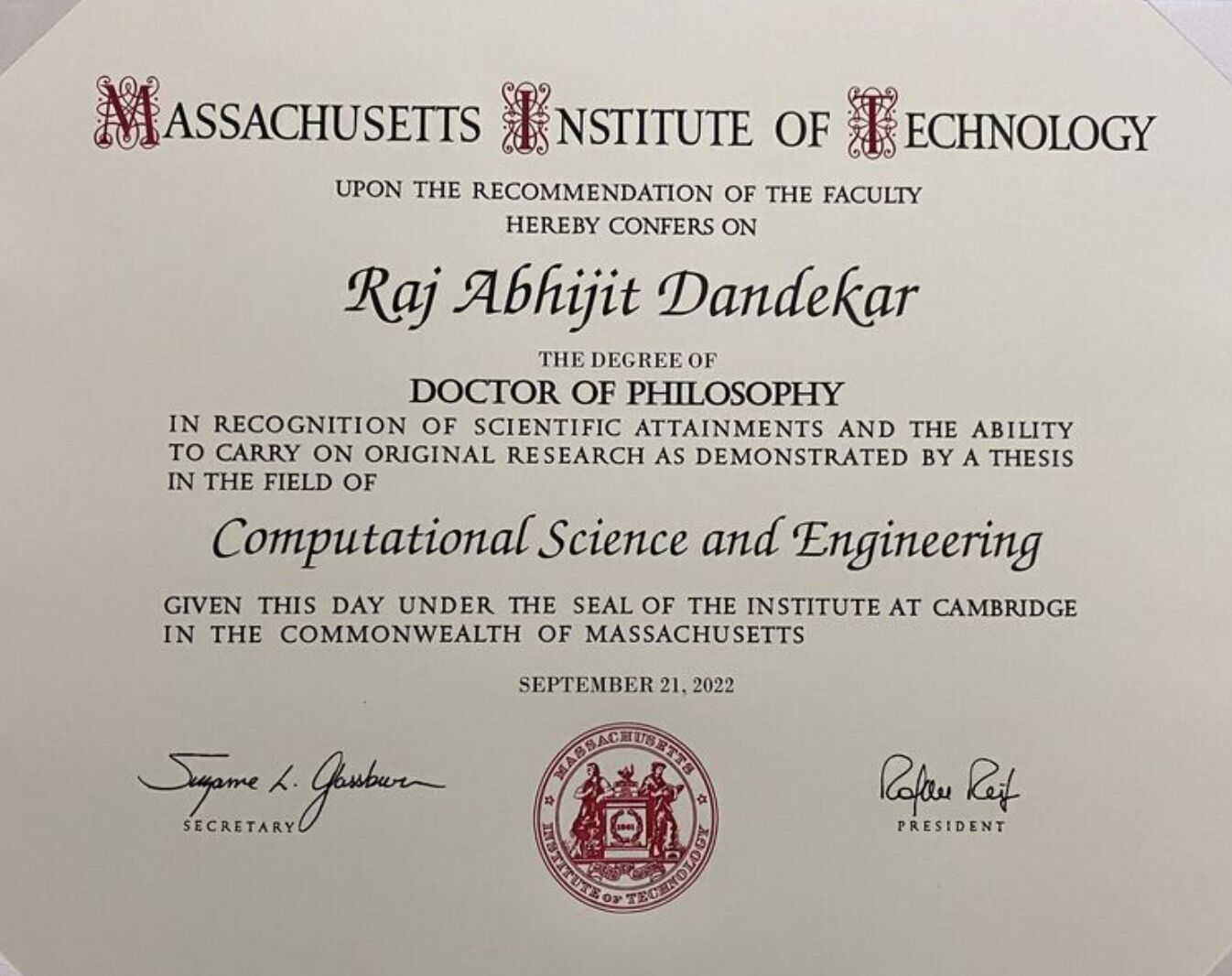

Dr. Sreedath Panat

Co-founder, Vizuara AI Labs

PhD from MIT, B.Tech from IIT Madras. 10+ years of research experience. Dr. Panat brings deep technical expertise from both academia and industry to make complex AI concepts accessible and practical.

Dr. Raj Dandekar

Co-founder, Vizuara AI Labs

PhD from MIT, B.Tech from IIT Madras. Dr. Raj specializes in building LLMs from scratch, including DeepSeek-style architectures. His expertise spans AI agents, scientific machine learning, and end-to-end model development.

Dr. Rajat Dandekar

Co-founder, Vizuara AI Labs

PhD from Purdue University, B.Tech and M.Tech from IIT Madras. Dr. Rajat brings deep expertise in reinforcement learning and reasoning models, focusing on advanced AI techniques for real-world applications.

Manning #1 Best-Seller

Build a DeepSeek Model (From Scratch)

By Dr. Raj Dandekar, Dr. Rajat Dandekar, Dr. Sreedath Panat & Naman Dwivedi

Learn from MIT PhD Researchers

Our lead instructor Dr. Sreedath Panat holds a PhD from MIT, where he conducted research in applied AI and scientific computing. Our team brings deep expertise in machine learning, neural networks, and applied AI research.

Sample Papers From Our Research

A selection of papers from our research in recent years. Students in the Industry Professional plan work on similar projects aimed at publication.

Enroll in the Bootcamp

Choose the plan that matches your goals, from self-paced learning to intensive research mentorship with MIT PhDs.

Researcher Plan

Save 24%. Originally Rs 1,25,000. MIT and Purdue PhDs as your research mentors.

- Lifetime access to all videos, code files, and homework assignments

- Access to bootcamp community on Discord

- Assignment checking and doubt clearance

- Free access to all ML webinars throughout the year

- Access to open list of research problems in ML/DL

- 4 months of personalized research guidance

- Publishing the research in conferences/journals

- How ML and DL can be applied to real-world industries

Frequently Asked Questions

Everything you need to know about the ML-DL Research Bootcamp.

Ready to Master Machine Learning?

Join hundreds of students and engineers who have built neural networks from scratch and launched ML research careers. Start building every algorithm from the ground up.